Cracking a difficult Vigenère Cipher

Last week I competed in the European Cyber Security Challenge, and I spent the first morning working on a “modified Vigenère” challenge. This post describes a highly effective method of cryptanalysing Vigenère ciphers and how it was adapted to solve the challenge.

Background

The Vigenère was invented in the 16th century and over the following centuries developed a reputation of being extremely hard to crack. As late as 1868, Alice in Wonderland author Lewis Carroll claimed the cipher was unbreakable, and in French it was referred to as “le chiffre indéchiffrable”. However, the reputation of the so-called “indecipherable cipher” was undeserved. Computing pioneer Charles Babbage broke it in the 1850s, and during the American Civil War, Vigenère messages sent by the Confederates were regularly intercepted and decrypted by the Union.

Before going any further, here is a refresher of how the cipher works:

Key: KEYKEYKEYKEYKEYKEYKEYKEYKEYKEYKEYKEYKEYKEYK

Plaintext: LECOEURASESRAISONSQUELARAISONNECONNAITPOINT

Ciphertext: VIAYISBEQOWPKMQYRQAYCVEPKMQYRLOGMXRYSXNYMLD

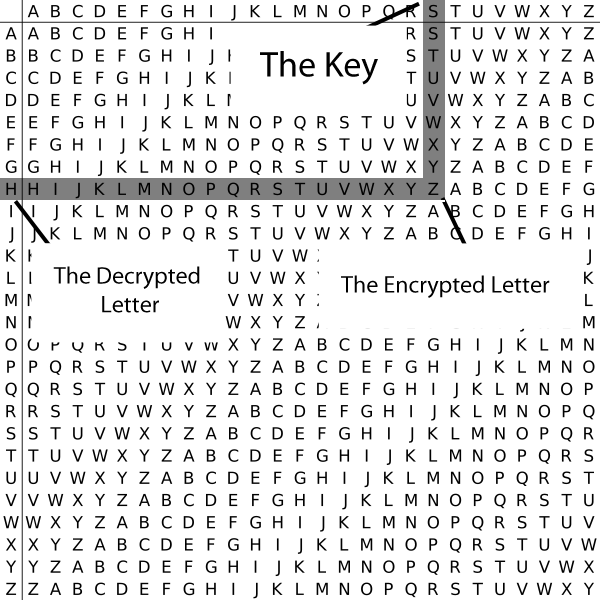

To produce each letter of the ciphertext, each key and plaintext letter is added together, using their positions in the alphabet, counting up from A as the ‘zeroth’ letter. Accordingly, K is the 10th letter in the alphabet and L is the 11th; these are added to get the 21st letter, V. If the addition goes over the end of the alphabet, it wraps back around using modular arithmetic: Y (24) + C (2) becomes 26 % 26 = 0 = A.

The defining characteristic of the Vigenère is that it is polyalphabetic; the same plaintext letter may map to a number of different ciphertext letters. This way, it achieves a very limited form of Claude Shannon’s confusion property of secure ciphers. In plain terms, this means that the relationship between the ciphertext and the key should be complex, so as to hide connections between the two. The fact that there is no direct mapping between individual letters in a Vigenère ciphertext and the key stumped cryptanalysts for years.

On the other hand, the Vigenère fails at the other crucial property of modern ciphers, diffusion, which refers to the importance of scattering the statistical structure of the plaintext over the entirety of the ciphertext. In an ideal cipher, a change of a single bit in the plaintext would change half the ciphertext, maximally concealing statistical patterns. The Vigenère is very bad at this because it has a repeating key, making statistical patterns detectable in the ciphertext. The shorter the key, the easier this is to see.

For instance, in our toy example, the plaintext L—like all the letters of the plaintext—can only map to three different ciphertext letters depending on which letter of the key it corresponds to at each position. Because of this, there are duplicate encryptions: L in the plaintext lines up with K in the key twice, producing an identical ciphertext of V both times. Early methods of cracking Vigenère ciphertexts focus on occurrences like this to determine the keylength. But there is a much stronger attack.

Finding the keylength

If we partition the ciphertext into three separate columns, the first consisting of letters encrypted by K, the second of letters encrypted by E, and the third of letters encrypted by Y, we essentially have three Caesar ciphers. Since a Caesar cipher is just a shift of all letters by the same value, the columns of ciphertext letters still retain the frequency distribution of the underlying plaintext language (French without diacritics in this case).

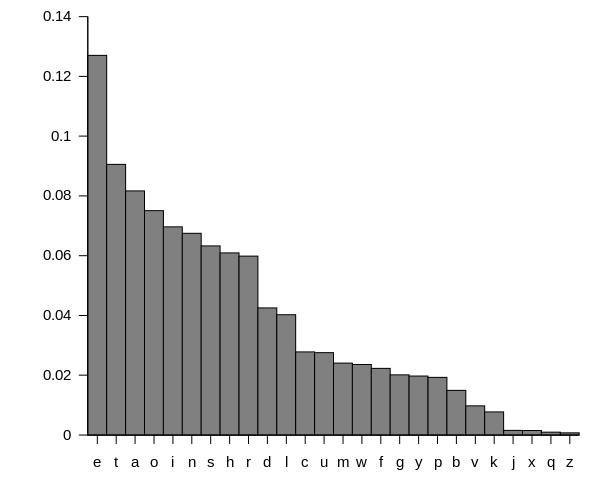

However, if we partition the ciphertext into a number of columns different from the keylength, and not a multiple of it, such as seven, we get something much more like random text than Caesar-shifted French. Because each column now contains letters encrypted by a number of different key shifts, there is no longer a pronounced frequency distribution. In a natural language text, some letters are common (E) and others are rare (Z). But in a large enough random text, one random letter is as equally likely to appear or “coincide” as any other.

If we test every possible keylength in this way and examine the resulting statistical distributions of letters, we can easily pick out the distribution that looks most like natural language. This is the intuition behind the index of coincidence attack on keylength, invented by chief NSA cryptographer William Friedman.

The following code implements this concept:

#!/usr/bin/python3

from collections import Counter

def ic(ctext):

num = 0.0

den = 0.0

for val in Counter(ctext).values():

i = val

num += i * (i - 1)

den += i

if den == 0.0:

return 0.0

else:

return num / (den * (den - 1))

def partition(text, num):

cols = [""] * num

for i, c in enumerate(text):

cols[i % num] += c

return cols

def find_keylen_ics(ctext, low=3, high=10, rows=5):

if high > len(ctext) / 2:

high = len(ctext) / 2

results = {}

for length in range(low, high + 1):

ics = [ic(col) for col in partition(ctext, length)]

results[length] = sum(ics) / len(ics)

best = sorted(results.items(), key=lambda kv: -kv[1])

print("%8s %8s" % ("keylen", "ic"))

for k, v in best[:rows]:

print("%8d %8.3f" % (k, v))

return best

ciphertext = "VIAYISBEQOWPKMQYRQAYCVEPKMQYRLOGMXRYSXNYMLDSLVIQORRORKSPJOGFYWCC"

find_keylen_ics(ciphertext)Output:

keylen ic

6 0.092

3 0.090

9 0.082

4 0.065

8 0.062

Even for such a short ciphertext, the find_keylen_ics function reports a keylength of three and its multiples as having the highest indices of coincidence. French literature actually has an index of coincidence of about 0.078. The result here is higher than that due to the limited character set and removal of diacritics, making “coincidences” more likely.

Cracking the key

Now that we know the keylength, and therefore the number of columns to partition the ciphertext into, the second part of the attack takes further advantage of the Caesar-like nature of these columns. Instead of just looking at the frequency distribution through the lens of a single statistic, we now consider the frequencies of individual plaintext letters.

For each column, we try every possible letter as the key letter for that column, and see which resulting decrypted text most resembles the target natural language. This is done by scoring common letters in the candidate plaintext highly, and penalising less common ones.

import operator

import string

def freq_score(text):

freqs = {

'a': 8167,

'b': 1492,

'c': 2782,

'd': 4253,

'e': 12702,

'f': 2228,

'g': 2015,

'h': 6094,

'i': 6966,

'j': 153,

'k': 772,

'l': 4025,

'm': 2406,

'n': 6749,

'o': 7507,

'p': 1929,

'q': 95,

'r': 5987,

's': 6327,

't': 9056,

'u': 2758,

'v': 978,

'w': 2360,

'x': 150,

'y': 1974,

'z': 74

}

score = 0

for c in text:

if c == ' ':

score += 10000

elif c.lower() in freqs:

score += freqs[c.lower()]

elif ord(c) >= 128:

score -= 5000

else:

score -= 1000

return score

def find_key(ctext, keylen, alph=string.ascii_lowercase):

key = ""

for col in partition(ctext, keylen):

scores = {}

for i, letter in enumerate(alph):

transposed = [alph[(alph.index(c.lower()) - i) %

len(alph)] for c in col]

scores[letter] = freq_score(transposed)

key += max(scores.items(), key=operator.itemgetter(1))[0]

return key

def decrypt(ctext, key, alph=string.ascii_lowercase):

return ''.join(alph[(alph.index(c.lower()) - alph.index(key[i % len(key)])) % len(alph)] for i, c in enumerate(ctext))

key = find_key(ciphertext, 3)

print(key)

print(decrypt(ciphertext, key))Output:

key

lecoeurasesraisonsquelaraisonneconnaitpointonlesentenmillechoses

Interestingly, this freq_score function, which is based on the frequency of English letters, works just as well here on French. One by one the best-scoring letter at each position of the key is found, until the cipher is completely cracked.

The Modified Vigenère

The above code works excellently on standard Vigenère ciphers, and there are plenty of online solvers out there implementing a similar logic. As predicted, the modified Vigenère cipher we were given in the competition included features designed to throw off traditional analysis.

We were provided a hexadecimally-encoded ciphertext file (modvigenere.cipher) and a C encryption tool (modvigenere.c). Here is the bulk of the encryption code:

int main(int argc, char *argv[])

{

unsigned char *key = NULL;

int keylen = 0;

unsigned char *buffer = NULL;

int n_read = 0;

int i;

unsigned char c;

if (argc != 2) {

printf("modvigenere key (hexstring)\n");

exit(1);

}

keylen = decode_hex(argv[1], &key);

buffer = (char *)malloc(keylen);

while ((n_read = read(0, buffer, keylen)) > 0) {

for (i=0; i<n_read; i++) {

switch (i % 8) {

case 0:

c = buffer[i] ^ key[i];

break;

case 1:

c = (buffer[i] + key[i]) % 256;

break;

case 2:

c = buffer[i] ^ key[i];

break;

case 3:

c = (buffer[i] - key[i]) % 256;

break;

case 4:

c = buffer[i] ^ key[i];

break;

case 5:

c = buffer[i] ^ key[i];

break;

case 6:

c = (buffer[i] + key[i]) % 256;

break;

case 7:

c = (buffer[i] - key[i]) % 256;

break;

}

printf("%02x", c);

}

if (n_read < keylen) {

exit(0);

}

}

}In summary, this main function:

- accepts a hexadecimally-encoded key as an argument.

- converts the key to an array of C integers from 0-255.

- sets up a character buffer the size of the keylength.

- loops over the following steps until running out of standard input:

- read standard input into the buffer.

- encode each character using a different operation on a rotation of eight.

- convert the integer result back to hexadecimal and print it.

The clear variation from the standard Vigenère is that different operations are used to encrypt depending on the position of the plaintext, rather than just addition modulo the size of the alphabet. These operations are xor, and addition and subtraction modulo 256.

This immediately prompted a few observations. The fact that the key is hexadecimally-encoded, plus encryption operations occur modulo 256, indicated that the key comprises characters in the range of extended ASCII (0-255) rather than alphabetic letters. The rotation of 8 different operations suggested a keylength that is a multiple of 8.

Despite the different encryption operations used, the index of coincidence test for keylength—applied after hex-decoding the ciphertext—still returned a strong result:

import re

with open('modvigenere.cipher') as f:

modvigenere = f.read()

ciphertext = [chr(int(a, 16)) for a in re.findall('.{2}', modvigenere)]

print(find_keylen_ics(ciphertext, high=30))Output:

keylen ic

26 0.061

13 0.060

4 0.007

5 0.007

6 0.007

Thirteen and its multiples had by far the best indices of coincidence, close to that of English (0.067). This was a good start, however it scuttled my assumption that the keylength would be a multiple of eight. I was uncertain about the next step due to the different periods of the key (13) and encryption operations (8). One thought was that this essentially made the key 13*8 = 104 characters long. However, looking back at the encryption code, I was reminded that the input buffer was only 13 characters, so the rotation of operations ‘reset’ every 13 characters. To illustrate:

Key: ABCDEFGHIJKLMABCDEFGHIJKLM

Operation: ^+^-^^+-^+^-^^+^-^^+-^+^-^

This also explained why the find_keylen_ics function worked so well. A correct keylength would partition the ciphertext into columns each associated with only one key letter and operation. An incorrect keylength would jumble the letters and operations, leading to a fairly even distribution of characters all over the extended ASCII range. Due to the larger alphabet, this would result in indices of coincidence even lower than random English text.

The next step was to write a variation on the find_key method from above. It’s cleanest to pass the relevant operator into a separate function that rates each letter on frequency score and returns the best match. Python’s operator library conveniently provides this facility:

def find_key_letter(col, op):

best_score = 0

best_char = 0

for i in range(256):

decoded = ''.join(chr(op(ord(a), i) % 256) for a in col)

if freq_score(decoded) > best_score:

best_score = freq_score(decoded)

best_char = i

return best_char

def modvigenere_find_key(ctext, keylen):

key = []

for i, col in enumerate(partition(ctext, keylen)):

if i % 8 == 0: key.append(find_key_letter(col, operator.xor))

if i % 8 == 1: key.append(find_key_letter(col, operator.sub))

if i % 8 == 2: key.append(find_key_letter(col, operator.xor))

if i % 8 == 3: key.append(find_key_letter(col, operator.add))

if i % 8 == 4: key.append(find_key_letter(col, operator.xor))

if i % 8 == 5: key.append(find_key_letter(col, operator.xor))

if i % 8 == 6: key.append(find_key_letter(col, operator.sub))

if i % 8 == 7: key.append(find_key_letter(col, operator.add))

return key

key = modvigenere_find_key(ciphertext, 13)

print(key)The final step was to use the found key to decrypt the ciphertext. Which sounds easy in theory, but in practice a bug in my code caused only parts of the plaintext to appear until a teammate looked through and fixed it, and the cipher was solved, revealing a nice hacking reference.

The decryption function below works similarly to modvigenere_find_key, although it resets the key index each 13 characters. It also uses a string rather than an array to store the result as we expect printable plaintext letters to be output. decrypt_or_default is a handy intermediate function that returns a dot if the candidate plaintext character doesn’t look printable, which avoids filling the terminal with garbage.

def decrypt_or_default(c, op, k, default='.'):

p = chr(op(c, k) % 256)

if p in string.printable:

return p

else:

return default

def modvigenere_decrypt(ctext, key):

plaintext = ""

for i, c in enumerate(ctext):

i %= len(key)

c = ord(c)

k = key[i]

if i % 8 == 0: plaintext += decrypt_or_default(c, operator.xor, k)

if i % 8 == 1: plaintext += decrypt_or_default(c, operator.sub, k)

if i % 8 == 2: plaintext += decrypt_or_default(c, operator.xor, k)

if i % 8 == 3: plaintext += decrypt_or_default(c, operator.add, k)

if i % 8 == 4: plaintext += decrypt_or_default(c, operator.xor, k)

if i % 8 == 5: plaintext += decrypt_or_default(c, operator.xor, k)

if i % 8 == 6: plaintext += decrypt_or_default(c, operator.sub, k)

if i % 8 == 7: plaintext += decrypt_or_default(c, operator.add, k)

return plaintext

print(modvigenere_decrypt(ciphertext, key))Output:

[69, 51, 170, 26, 190, 211, 28, 35, 159, 239, 52, 253, 109]

In 1969, students Martin Brice and Cosmo are sneakers who hack into computer networks using university equipment...

Conclusion

This was a fun challenge that made us think about the most optimal ways to exploit the weaknesses of classical ciphers. Several other teams struggled with the challenge and complained that it was impossible, which felt good to hear hours after we had cracked it!

The attack implementation will doubtless come in useful in future CTFs as well as for a personal project I have in mind. I would one day like to write a definitive open source classical cipher cryptanalysis program, borrowing ideas from CryptoCrack and CyberChef and written in a trendy language like Rust. Update: I’ve since been focussing my efforts on CryptoHack, and CrypTool 2 already does what I said.

The full code for this post is available on Github.